This article is about Machine Learning

How to do Data Analysis using Python

By NIIT Editorial

Published on 12/07/2021

8 minutes

Data analysis is the process of cleaning, interpreting, analyzing and visualizing data to find meaningful insights that drive smarter and more effective business decisions.

The term data analytics is frequently used in business; it is the discipline that covers the entire process of data management. Data analytics includes not only data analysis itself but also data collection, organization, storage, and the tools and techniques used to dig deep into data and communicate the results, for instance data visualization tools. Data analysis, on the other hand, focuses on transforming raw data into useful information, statistics and explanations. To learn about data analysis using Python in detail, you can go to NIIT.

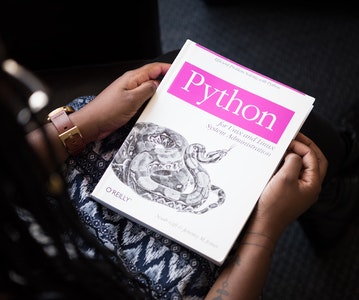

Python Libraries for Data Analysis

Python is a general-purpose programming language, which means it can be used to develop both web and desktop applications. It is also useful for developing complex numeric and scientific applications. A wide assortment of Python libraries is available. Two major libraries, NumPy and Pandas, help data analysts do their work.

NumPy

NumPy provides Python developers with many tools for handling large amounts of data in a relatively simple manner. One of the most prominent data structures provided by NumPy is the 'ndarray'. It is indexed numerically like a standard Python sequence (a list or tuple), but unlike a list or tuple, which can hold elements of different types, an ndarray is homogeneous: all of its elements share one data type. Apart from this, it has certain attributes that tell us what the ndarray contains.

The attributes include shape, dtype and strides; the low-level ndarray constructor accepts the same three together with buffer, offset and order.

To create a NumPy 'ndarray', we have three options, depending on what kind of ndarray we want to create: "array", "zeros" and "empty". There is also a low-level constructor called "ndarray", with which we can create an ndarray directly.
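The three creation functions can be sketched as follows. This is a minimal illustration, not the article's original snippet; the variable names and sample values are assumptions chosen for the example.

```python
import numpy as np

# Build an ndarray from an existing Python sequence (here a nested list).
a = np.array([[1, 2, 3], [4, 5, 6]])

# Build an ndarray of a given shape filled with zeros (float64 by default).
z = np.zeros((2, 3))

# Allocate an ndarray of a given shape without initializing it;
# its contents are arbitrary until you assign to them.
e = np.empty((2, 3))

print(a)
print(z)
print(e.shape)
```

Use "empty" only when every element will be overwritten afterwards, since the values it starts with are not meaningful.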

An ndarray has many methods. However, before we get into them, let us look at the 'shape' attribute.

The 'ndarray' is an n-dimensional array, and the 'shape' attribute gives the length of each of these dimensions. The 'shape' attribute is a tuple of integers. For instance, a one-dimensional array (like a list) has a single element in the tuple, which gives the length of the array. A 2D array has two elements in the shape tuple, and so on.
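As a small sketch of the 'shape' attribute (the array names and values here are illustrative assumptions):

```python
import numpy as np

one_d = np.array([1, 2, 3, 4])
two_d = np.array([[1, 2, 3], [4, 5, 6]])

# A 1D array has a one-element shape tuple: its length.
print(one_d.shape)  # (4,)

# A 2D array has a two-element shape tuple: (rows, columns).
print(two_d.shape)  # (2, 3)
```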

Another important function for ndarrays is 'reshape()'. As the name suggests, it changes the shape of a data structure (an ndarray in this case). Note that reshaping does not copy the data when a copy can be avoided: where possible, reshape() returns a view of the original data. The parameters passed to the reshape() function are as follows:

- An array-like object (an ndarray or an ordinary Python list, for example). This parameter is required.

- An integer or a tuple of integers, giving the new shape for the first argument.

- The order, which can be any of 'C', 'F' and 'A'. 'C' means to read and write the elements in C-like index order (the last axis index changes fastest and the first axis index changes slowest). 'F' means Fortran-like index order, which is the opposite of 'C' ordering. 'A' means to read and write the elements in Fortran-like order if the first argument is Fortran contiguous in memory, and in C-like order otherwise. This parameter is optional.
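The effect of the three parameters can be sketched as below. The sample data and variable names are assumptions made for illustration.

```python
import numpy as np

data = np.arange(6)  # array([0, 1, 2, 3, 4, 5])

# Default C-like order: fill row by row.
c_order = np.reshape(data, (2, 3))
print(c_order)
# [[0 1 2]
#  [3 4 5]]

# Fortran-like order: fill column by column.
f_order = np.reshape(data, (2, 3), order="F")
print(f_order)
# [[0 2 4]
#  [1 3 5]]

# The first argument may also be a plain Python list.
from_list = np.reshape([1, 2, 3, 4], (2, 2))

# Here no copy was needed, so c_order shares memory with data.
shares = np.shares_memory(data, c_order)
print(shares)  # True
```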

During data analysis, you will often receive the data as text. So how do we convert that text to an ndarray so that we can perform NumPy operations on it? The answer is 'genfromtxt()'.

"genfromtxt()" essentially runs two loops. The first loop converts each line of the file into a sequence of strings. The second loop converts each string to the appropriate data type. The function is therefore slower than single-loop counterparts such as "loadtxt()"; however, it is more flexible and can handle missing data, which loadtxt() cannot.

The 'delimiter' argument determines how the lines are split. For example, a CSV file uses the ',' character as the delimiter, while a TSV file uses '\t'. By default, if no delimiter is specified, whitespace is treated as the delimiter.
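A minimal sketch of genfromtxt() on comma-separated text with one missing field follows. The sample text is an assumption; an in-memory StringIO object stands in for a file on disk.

```python
import numpy as np
from io import StringIO

# Three CSV lines; the middle value of the second line is missing.
csv_text = "1,2,3\n4,,6\n7,8,9"

# With the default float dtype, the missing field becomes nan.
arr = np.genfromtxt(StringIO(csv_text), delimiter=",")
print(arr)

# filling_values replaces missing fields with a value of your choice.
filled = np.genfromtxt(StringIO(csv_text), delimiter=",", filling_values=0)
print(filled)
```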

Pandas

The "pandas" module is especially useful for processing data and discovering patterns in it, which makes it one of the most helpful tools for any data scientist or engineer.

Pandas gives the data scientist or analyst two distinctive and useful data structures named "Series" and "DataFrame". A "Series" is simply a labeled column of data that can hold any data type (int, float, string, objects, and so forth). A pandas "Series" can be created using the constructor call:

pandas.Series(data, index, dtype, copy)

The "data" argument is a list of data elements (usually passed as a NumPy ndarray). "index" is a list of hashable labels with the same length as the "data" argument. "dtype" defines the data type, since a "Series" is a homogeneous collection of elements, and "copy" indicates whether the input data should be copied. By default, this is False.
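A minimal sketch of the constructor in use is shown below. The values and index labels are assumptions chosen for the example.

```python
import numpy as np
import pandas as pd

data = np.array([80, 74, 99, 72])

# One label per element; dtype is given explicitly for clarity.
s = pd.Series(data, index=["first", "second", "third", "fourth"], dtype="int64")

print(s)
print(s["third"])  # 99
```

Elements are looked up by label, so s["third"] returns the value stored under the label 'third'.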

Pandas works by placing the input data in a data structure called a "DataFrame". So if you give pandas a CSV file, it will create a DataFrame from the information passed to it, which lets you perform whatever operations you need on the data.

Assume we have a dictionary containing the marks of students in their first, second, third and fourth years, which we pass to the DataFrame constructor.
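These two steps can be sketched as follows. The values are reconstructed from the output shown in this section; the dictionary name `marks` is an assumption, while `df` matches the name used in the calls later in the article.

```python
import pandas as pd

# Each key becomes a column; each list holds one value per year.
marks = {
    "maths": [80, 74, 99, 72],
    "physics": [71, 73, 82, 89],
    "chemistry": [72, 84, 91, 70],
}

# The index supplies the row labels.
df = pd.DataFrame(marks, index=["first", "second", "third", "fourth"])
print(df)
```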

The output would be:

|        | maths | physics | chemistry |
|--------|-------|---------|-----------|
| first  | 80    | 71      | 72        |
| second | 74    | 73      | 84        |
| third  | 99    | 82      | 91        |
| fourth | 72    | 89      | 70        |

In this way, each key-value pair represents a column in the DataFrame.

We will see how to access specific elements. Before that, however, let's explore the data a little so that we understand it, which will help us decide which techniques to use to analyze it.

df.head(2)

This prints the first two rows of the dataset. With the small sample of data we are using, this function may seem pointless, but if you work with data in the order of thousands of rows (which is very likely in a realistic problem), it gives you a quick look at how the data is laid out. Similarly, you can use the tail() function to view the end of the data.

df.tail(2)

This shows the last two rows of data. To check the number of rows and columns your data has, you can use the 'shape' attribute.

df.shape

If you need to drop duplicates from your data, you can use the drop_duplicates() function. It drops all duplicate rows and returns a duplicate-free DataFrame.

df1 = df.drop_duplicates()

Another function that you may need often is 'info()'. This function gives the user a fair idea of what sort of data the dataset contains: applied to our dataset, it lists the index, each column with its non-null count and data type, and the memory usage.
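The inspection calls described above can be tried together in one short sketch. The DataFrame is rebuilt here so the block runs on its own; the duplicate row is added artificially, and the names `with_dup` and `deduped` are assumptions made for illustration.

```python
import pandas as pd

df = pd.DataFrame(
    {
        "maths": [80, 74, 99, 72],
        "physics": [71, 73, 82, 89],
        "chemistry": [72, 84, 91, 70],
    },
    index=["first", "second", "third", "fourth"],
)

print(df.head(2))  # rows 'first' and 'second'
print(df.tail(2))  # rows 'third' and 'fourth'
print(df.shape)    # (4, 3)

# Append a copy of the first row to create a duplicate, then drop it.
with_dup = pd.concat([df, df.head(1)])
deduped = with_dup.drop_duplicates()
print(with_dup.shape, deduped.shape)  # (5, 3) (4, 3)

# Prints a summary of the index, columns, dtypes and memory usage.
df.info()
```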

Let's see how we can access a specific value in a pandas DataFrame. To retrieve a row, we can use 'loc'. For instance, df.loc['third'] returns the row labelled 'third', whose values are maths 99, physics 82 and chemistry 91.
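A minimal sketch of label-based access with 'loc' follows. The DataFrame is rebuilt here so the block runs on its own; the names `row` and `value` are assumptions.

```python
import pandas as pd

df = pd.DataFrame(
    {
        "maths": [80, 74, 99, 72],
        "physics": [71, 73, 82, 89],
        "chemistry": [72, 84, 91, 70],
    },
    index=["first", "second", "third", "fourth"],
)

# Selecting a single row label returns that row as a Series.
row = df.loc["third"]
print(row)

# Passing a row label and a column label returns a single value.
value = df.loc["third", "physics"]
print(value)  # 82
```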

Note: Pandas and NumPy are large modules and are full of very useful tools. We have briefly discussed how NumPy and Pandas can be used in data analysis.

Conclusion

Data analysis is the process of interpreting, analyzing and visualizing data, and its tools can be used to extract essential information from business data. Python is an important part of the data analyst's toolkit, as it is well suited to automating repetitive tasks and manipulating data. A wide assortment of Python libraries is available, and libraries such as NumPy and Pandas help data analysts do their work.
